大文件分片上传

大文件分片上传

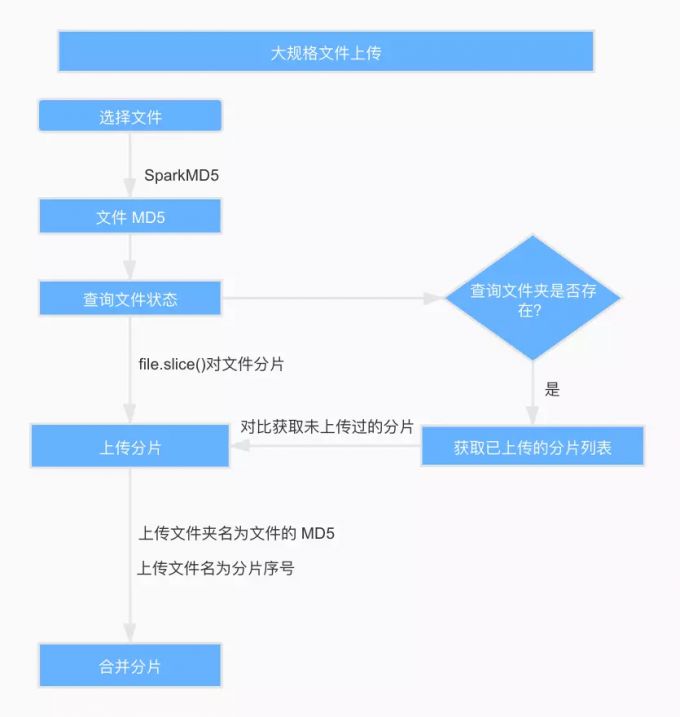

将一个大文件切片成多个小文件,并行请求接口进行上传,所有请求得到响应后,在服务器端合并所有的分片文件。当分片上传失败,可以在重新上传时进行判断,只上传上次失败的部分,减少用户的等待时间,缓解服务器压力。

文件 MD5 计算

根据文件的修改时间、文件名称、最后修改时间等信息,通过 spark-md5 生成文件的 MD5。需要注意的是,大规格文件需要分片读取文件,将读取的文件内容添加到 spark-md5 的 hash 计算中,直到文件读取完毕,最后返回最终的 hash 码到 callback 回调函数里面。

// 修改时间+文件名称+最后修改时间-->MD5

md5File (file) {

return new Promise((resolve, reject) => {

let blobSlice =

File.prototype.slice ||

File.prototype.mozSlice ||

File.prototype.webkitSlice

let chunkSize = file.size / 100

let chunks = 100

let currentChunk = 0

let spark = new SparkMD5.ArrayBuffer()

let fileReader = new FileReader()

fileReader.onload = function (e) {

console.log('read chunk nr', currentChunk + 1, 'of', chunks)

spark.append(e.target.result) // Append array buffer

currentChunk++

if (currentChunk < chunks) {

loadNext()

} else {

let cur = +new Date()

console.log('finished loading')

// alert(spark.end() + '---' + (cur - pre)); // Compute hash

let result = spark.end()

resolve(result)

}

}

fileReader.onerror = function (err) {

console.warn('oops, something went wrong.')

reject(err)

}

function loadNext () {

let start = currentChunk * chunkSize

let end =

start + chunkSize >= file.size ? file.size : start + chunkSize

fileReader.readAsArrayBuffer(blobSlice.call(file, start, end))

}

loadNext()

})

}

文件分片

前端得到文件的 MD5 后,从后台查询是否存在名称为 MD5 的文件夹,如果存在,列出文件夹下所有文件,得到已上传的切片列表,如果不存在,则已上传的切片列表为空。

// 校验文件的MD5

checkFileMD5 (file, fileName, fileMd5Value, onError) {

const fileSize = file.size

const { chunkSize, uploadProgress } = this

this.chunks = Math.ceil(fileSize / chunkSize)

return new Promise(async (resolve, reject) => {

const params = {

fileName: fileName,

fileMd5Value: fileMd5Value,

}

const { ok, data } = await services.checkFile(params)

if (ok) {

this.hasUploaded = data.chunkList.length

uploadProgress(file)

resolve(data)

} else {

reject(ok)

onError()

}

})

}

文件上传优化的核心就是文件分片,Blob 对象中的 slice 方法可以对文件进行切割,File 对象是继承 Blob 对象的,因此 File 对象也有 slice 方法。定义每一个分片文件的大小变量为 chunkSize,通过文件大小 FileSize 和分片大小 chunkSize 得到分片数量 chunks,使用 for 循环和 file.slice() 方法对文件进行分片,序号为 0 - n,和已上传的切片列表做比对,得到所有未上传的分片,push 到请求列表 requestList。

async checkAndUploadChunk (file, fileMd5Value, chunkList) {

let { chunks, upload } = this

const requestList = []

for (let i = 0; i < chunks; i++) {

let exit = chunkList.indexOf(i + '') > -1

// 如果已经存在, 则不用再上传当前块

if (!exit) {

requestList.push(upload(i, fileMd5Value, file))

}

}

console.log({ requestList })

const result =

requestList.length > 0

? await Promise.all(requestList)

.then(result => {

console.log({ result })

return result.every(i => i.ok)

})

.catch(err => {

return err

})

: true

console.log({ result })

return result === true

}

调用 Promise.all 并发上传所有的切片,将切片序号、切片文件、文件 MD5 传给后台。后台接收到上传请求后,首先查看名称为文件 MD5 的文件夹是否存在,不存在则创建文件夹,然后通过 fs-extra 的 rename 方法,将切片从临时路径移动切片文件夹中,结果如下:

// 上传chunk

upload (i, fileMd5Value, file) {

const { uploadProgress, chunks } = this

return new Promise((resolve, reject) => {

let { chunkSize } = this

// 构造一个表单,FormData 是 HTML5 新增的

let end =

(i + 1) * chunkSize >= file.size ? file.size : (i + 1) * chunkSize

let form = new FormData()

form.append('data', file.slice(i * chunkSize, end)) // file对象的slice方法用于切出文件的一部分

form.append('total', chunks) // 总片数

form.append('index', i) // 当前是第几片

form.append('fileMd5Value', fileMd5Value)

services

.uploadLarge(form)

.then(data => {

if (data.ok) {

this.hasUploaded++

uploadProgress(file)

}

console.log({ data })

resolve(data)

})

.catch(err => {

reject(err)

})

})

}

合并分片

上传完所有文件分片后,前端主动通知服务端进行合并,服务端接受到这个请求时主动合并切片,通过文件 MD5 在服务器的文件上传路径中找到同名文件夹。从上文可知,文件分片是按照分片序号命名的,而分片上传接口是异步的,无法保证服务器接收到的切片是按照请求顺序拼接。所以应该在合并文件夹里的分片文件前,根据文件名进行排序,然后再通过 concat-files 合并分片文件,得到用户上传的文件。至此大文件上传就完成了。

// 合并文件

exports.merge = {

validate: {

query: {

fileName: Joi.string().trim().required().description("文件名称"),

md5: Joi.string().trim().required().description("文件md5"),

size: Joi.string().trim().required().description("文件大小"),

},

},

permission: {

roles: ["user"],

},

async handler(ctx) {

const { fileName, md5, size } = ctx.request.query;

let { name, base: filename, ext } = path.parse(fileName);

const newFileName = randomFilename(name, ext);

await mergeFiles(path.join(uploadDir, md5), uploadDir, newFileName, size)

.then(async () => {

const file = {

key: newFileName,

name: filename,

mime_type: mime.getType(`${uploadDir}/${newFileName}`),

ext,

path: `${uploadDir}/${newFileName}`,

provider: "oss",

size,

owner: ctx.state.user.id,

};

const key = encodeURIComponent(file.key).replace(/%/g, "").slice(-100);

file.url = await uploadLocalFileToOss(file.path, key);

file.url = getFileUrl(file);

const f = await File.create(omit(file, "path"));

const files = [];

files.push(f);

ctx.body = invokeMap(files, "toJSON");

})

.catch(() => {

throw Boom.badData("大文件分片合并失败,请稍候重试~");

});

},

};